We live in a digital economy in which software has shifted from being a driver of nominal efficiency gains to an enabler of new customer experiences and markets. As organizations aggressively move their business processes into software, the software delivery chain is becoming an increasingly attractive target for those looking to reach end-user customers.

Traditionally, the term "supply chain" has most commonly been applied to physical processes in the world of manufacturing, raw materials and natural resources. Yet its common definition, "a system of organizations, people, activities, information, and resources involved in moving a product or service from supplier to customer", offers clear parallels to the software delivery process. The recent industry shift towards DevOps, which applies pipelines and delivery chains to software development, has aligned software delivery even more closely with the term. By looking at software delivery through this "supply chain" lens, we can start to see potential risks that previously remained under the radar.

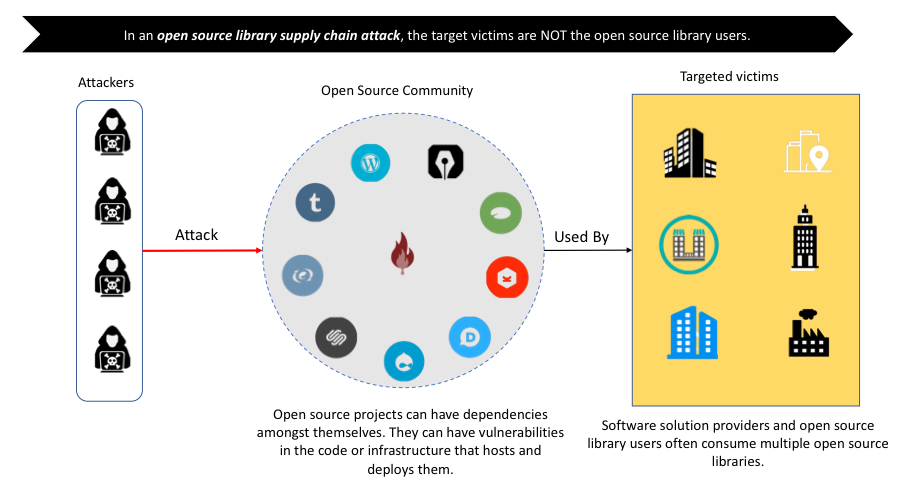

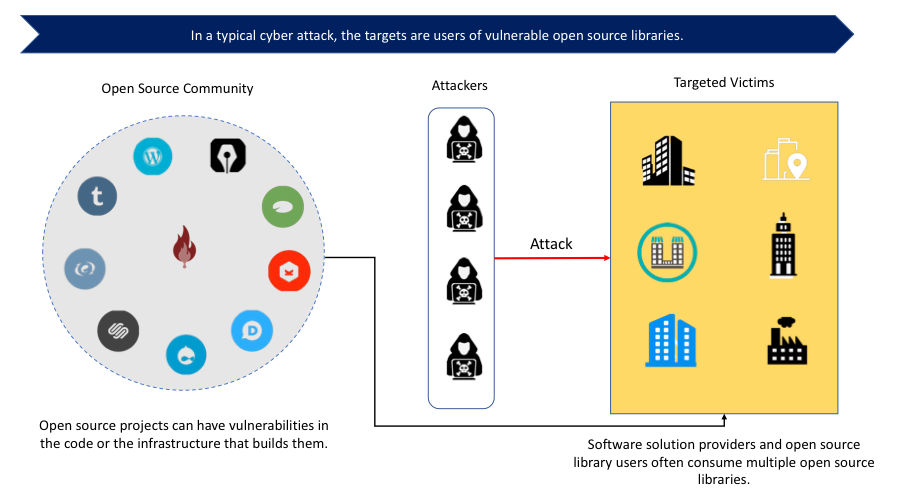

Because viewing software development as a supply chain is still a novel idea, there is an understandable lack of awareness of the associated risks compared to other cyber security attacks. Software supply chain attacks are designed to exploit the trust afforded to partners and sources in the software supply chain, as opposed to exploiting vulnerabilities in the software itself. This means they are often hidden and less direct, with the intended victim typically sitting downstream in the supply chain rather than at ground zero.

It is in this context that we are seeing a rise in a very specific type of software supply chain attack, the OSSL Trust Attack, which leverages Open Source Software Libraries (OSSLs). In this blog, I will talk through how OSSLs are being abused and how we might act to stem the tide.

The prevalence of OSS code in modern software

OSSLs are widely used across enterprises, providing the foundational code on which today's businesses are built. Long gone are the days when one individual would write all the code for a piece of software from scratch. In fact, modern software is composed of, on average, 79% reusable libraries from open source projects and only 21% custom code. This code is then absorbed and deployed into live production, where it is consumed by the organization's customers.

Even closed environments use open source code. According to a 2018 Open Source Security and Risk Analysis (OSSRA) report, 96% of the applications scanned contained open source components, with many applications containing more open source than proprietary code. Software security company, and open source veteran, Sonatype reported that the average application consists of 106 open source components. In fact, most of today's software systems and products are built on software that is supplied by various third-party open source providers.

OSSLs often run with the same privilege granted to an organization's custom code, without any constraints or checks. The pervasiveness of OSSLs means we have grown accustomed to trusting them implicitly. Newer generations of developers and engineers have been raised on the assumption that the legitimacy of OSSLs from established sources is unquestionable. As such, developers and engineers overlook the need for appropriate and preemptive security controls and mechanisms.

Is our blind trust in OSSLs misplaced?

In an ideal world, this system would be flawless and our implicit trust would be well placed, but we do not live in an ideal world. OSSLs are created all over the world, authored by both known entities and anonymous individuals. There is no single "owner" or moderator, and no proper identification or trust verification. Instead, the community shares responsibility for ensuring the quality of code through use. Yet that crowdsourcing of opinion is often more focused on whether the code performs effectively and is useful, and less on whether the code has been manipulated. Manipulated code, however, is exactly what we are seeing today.

If an attacker is able to modify code stored in an open source library, then they can insert malicious code. This code is then picked up and used by the trusting OSSL community, who build it into products that then make their way into production. So, while it is the OSSL that has been breached, it is the OSSL community, and potentially their customers, who are ultimately impacted. Another worrying trend is the emergence of rogue OSSLs run by hackers. Because companies use so many OSSLs, it is increasingly hard to distinguish which are legitimate and which are not. In these instances, the attacker doesn't even need to hack into an OSSL.

OSSL Trust Attacks rely on the fact that companies trust OSSLs. Rather than targeting OSSL users directly, attackers use the OSSL as a vehicle to distribute malicious code downstream in the supply chain while staying at arm's length. Trust in the OSSL is propagated implicitly from one node to the next: the developer trusts the OSSL, and the end customer or employee trusts the software because it comes from a legitimate source. The attacker exploits this chain of trust so that the effect cascades down the chain.


The rise and rise of the OSSL Trust Attack

2018 was a fruitful year for OSSL Trust Attacks, which suggests that hackers have not only spotted this opportunity but also regard it as a tried and tested route to success. For instance, event-stream, an open source JavaScript library with 2 million downloads, was injected with malicious code that can steal bitcoins from unwitting wallets. The library was widely used by Fortune 500 companies and small startups alike.

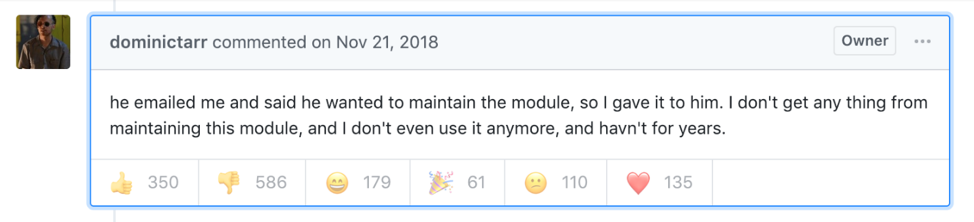

Such notable popularity, however, was not backed by any secured and tested credibility provided by the backend management system. According to the initial report on the GitHub repository of event-stream, the original owner casually handed ownership to a stranger simply because the stranger asked for it.

Figure 1. GitHub communications from event-stream library's original creator and owner regarding the ownership transfer

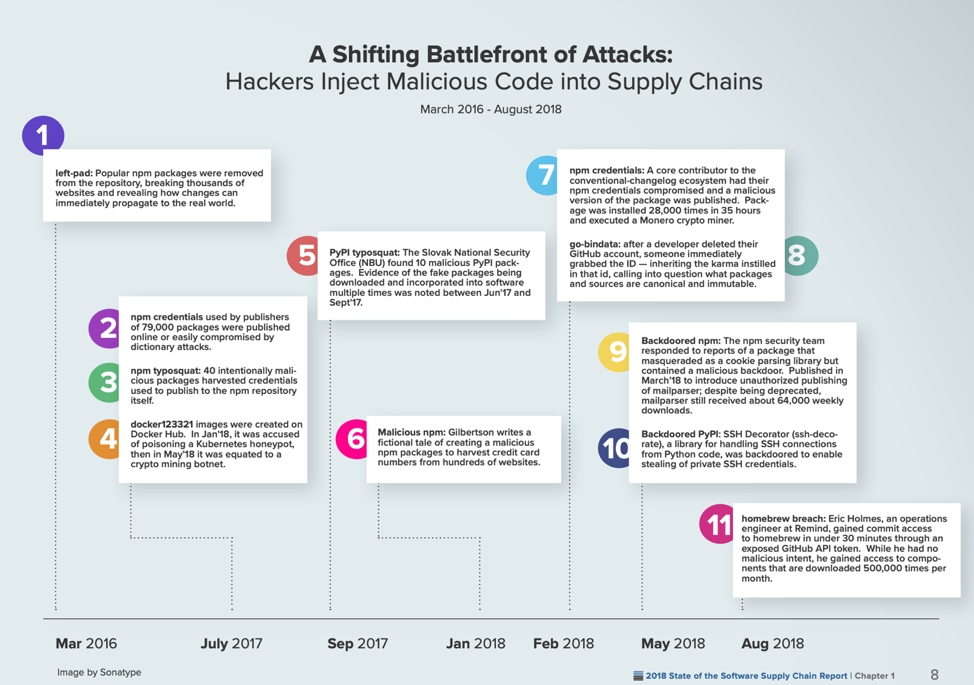

Sonatype recorded a total of 11 incidents in which attackers used an OSSL Trust Attack, six of which took place in 2018, as shown in Figure 2.

Figure 2. Sonatype's recorded open source supply chain attacks

What distinguishes an OSSL Trust Attack from other software security attacks?

As mentioned previously, one of the defining characteristics that sets an OSSL Trust Attack apart from other software security attacks is that the organization that is initially breached (i.e. the OSSL host) is not the intended target or victim. The hacker is just using the OSSL as a Trojan horse, exploiting the trust of the community in order to disseminate their malware. By contrast, if OSSL code that an organization had knowingly used in its systems was later found to have vulnerabilities, and an attacker then exploited them, this would not be considered an OSSL Trust Attack but rather a regular software security attack.

The key distinguishing point is that in a software supply chain attack, the party that is directly attacked is often not the intended victim, while in a regular attack, the direct target is the intended victim.

The indirect nature of the attack makes it hard to assign responsibility, so nobody takes ownership of the problem. Since the party that bears the vulnerability (i.e. the OSSL) is not the direct victim of the attack, it has little incentive to ensure the security and trustworthiness of the third-party software it uses. As for the victims, most do not know that the software they are using, be it proprietary or open source, is insecure or untrustworthy. In the rare cases where they do know, there is little they can do except abandon the integrated software altogether.

How can we prevent OSSL Trust Attacks?

There has been little discussion around the potential solutions to this problem. We have only just begun to realize that the lack of proper software identity management in the open source community poses a big security risk. Given the vast amount and variety of software born and raised in the open source environment, a defense that addresses only a specific language, framework, or technology will not scale. The attacks we have witnessed so far targeted not only Python, but also JavaScript, Docker images and Homebrew. This is a systemic problem that demands a systematic solution for open source software delivery and distribution.

The open source world is made up of many smaller, self-organized communities, so any plausible solution will likely take time to implement. Until the infrastructure and ecosystem mature, open source software delivery and distribution will remain vulnerable to OSSL Trust Attacks. This is therefore an area that requires urgent attention and discussion.

One available solution that would help address the issue of rogue OSSLs is code signing. Since the early 90s, code signing has been used as an effective security control in software deployment and distribution to ensure the authenticity of the publisher and the integrity of the software package. Major operating systems such as Windows, Mac OS X, Android, and iOS all have built-in validation systems that, in collaboration with the existing Certificate Authorities within the PKI ecosystem, inform end users of the provenance of a software package and the identity of its publisher.
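Full code signing relies on asymmetric keys and a certificate chain, which is more than a short example can cover. The integrity half of the check, however, can be sketched with the Python standard library alone: compare the digest of a downloaded artifact against a digest that was pinned when the package was vetted. This is a minimal sketch, not a signature scheme; the function names and the sample artifact are hypothetical, and a pinned digest says nothing about who published the package.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_pinned_digest(artifact: bytes, pinned_hex: str) -> bool:
    """Check that `artifact` hashes to the digest recorded at vetting time.

    This covers integrity only. It does NOT establish publisher identity,
    which is what a code-signing certificate adds on top.
    """
    # compare_digest performs a constant-time comparison of the two strings.
    return hmac.compare_digest(sha256_hex(artifact), pinned_hex.lower())


# Hypothetical package contents, pinned when the package was reviewed.
vetted_artifact = b"def greet():\n    return 'hello'\n"
pinned = sha256_hex(vetted_artifact)

# A later download that has been modified no longer matches the pin.
tampered_artifact = vetted_artifact + b"import os  # injected line\n"
```

Here `matches_pinned_digest(vetted_artifact, pinned)` is true, while the same call with `tampered_artifact` is false. pip offers a comparable control through its `--require-hashes` option, which rejects packages whose hashes are not listed in the requirements file.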

With a sophisticated code signing control built into open source package management systems, such as Python's official repository PyPI, a doppelgänger Colourama package would not be able to pass itself off as the legitimate Colorama package. Sonatype, in its recently published State of the Software Supply Chain 2018 report, highlighted that “DevOps teams are 90% more likely to comply with open source governance when policies are automated”. This is an encouraging observation: if code signing is factored into the OSS governance apparatus as part of the shift towards DevOps, organizations will benefit tremendously from fortifying the trust that is now so easily abused.
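Until registries enforce publisher identity, the Colourama-style lookalike can at least be flagged on the consumer side. The sketch below is one possible heuristic, not a feature of PyPI or pip: it compares a requested package name against an allowlist of vetted names using string similarity from the standard library. The allowlist contents, the function names, and the 0.85 threshold are all assumptions chosen for illustration.

```python
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical allowlist of package names the organization has vetted.
TRUSTED_PACKAGES = frozenset({"colorama", "requests", "numpy"})


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two names, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def find_lookalike(
    candidate: str,
    trusted: frozenset = TRUSTED_PACKAGES,
    threshold: float = 0.85,  # assumed cut-off; tune against real data
) -> Optional[str]:
    """Return the trusted name that `candidate` closely imitates, if any.

    An exact match is a vetted package, not a lookalike, so it returns None.
    """
    name = candidate.lower()
    if name in trusted:
        return None
    # Pick the trusted name most similar to the candidate.
    closest = max(trusted, key=lambda t: similarity(name, t))
    return closest if similarity(name, closest) >= threshold else None
```

Calling `find_lookalike("colourama")` returns `"colorama"`, which a build pipeline could treat as a reason to block the install and ask for review, while `find_lookalike("colorama")` returns `None`. A similarity check like this only catches near-identical names; it does nothing against a legitimate package whose ownership or contents have been compromised.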

Admittedly, code signing has its limitations. One is that it does not prevent trust escalation attacks, where different degrees of trust are placed on different entities, software and code. By design, it cannot ensure that the content of a software package with a legitimate and trustworthy signing identity is free of vulnerabilities or malicious code. And if the private key of a legitimate certificate is compromised, an attacker can replace the legitimate software content with whatever they prefer. Despite these imperfections, code signing, like the PKI and TLS certificates that underpin an encrypted and more trustworthy web, remains a tested approach that is worth exploring to fortify trust in OSS supply chains.
